Retrieval Augmented Generation (RAG) powered search

txtchat builds retrieval augmented generation (RAG) and language model powered search applications.

The advent of large language models (LLMs) has pushed a reimagination of search. LLM-powered search can do more. Instead of just bringing back results, search can now extract, summarize, translate and transform content into answers.

txtchat adds a set of intelligent agents that are available to integrate with messaging platforms. These agents or personas are associated with an automated account and respond to messages with AI-powered responses. Workflows can use large language models (LLMs), small models or both.

txtchat is built with Python 3.8+ and txtai.

The easiest way to install is via pip and PyPI

pip install txtchat

You can also install txtchat directly from GitHub. Using a Python Virtual Environment is recommended.

pip install git+https://github.com/neuml/txtchat

Python 3.8+ is supported

See this link to help resolve environment-specific install issues.

txtchat is designed to and will support a number of messaging platforms. Currently, Rocket.Chat is the only supported platform given it's ability to be installed in a local environment along with being MIT-licensed. The easiest way to start a local Rocket.Chat instance is with Docker Compose. See these instructions for more.

Extending txtchat to additional platforms only needs a new Agent subclass for that platform.

A persona is a combination of a chat agent and workflow that determines the type of responses. Each agent is tied to an account in the messaging platform. Persona workflows are messaging-platform agnostic. The txtchat-persona repository has a list of standard persona workflows.

- Wikitalk: Chat with Wikipedia

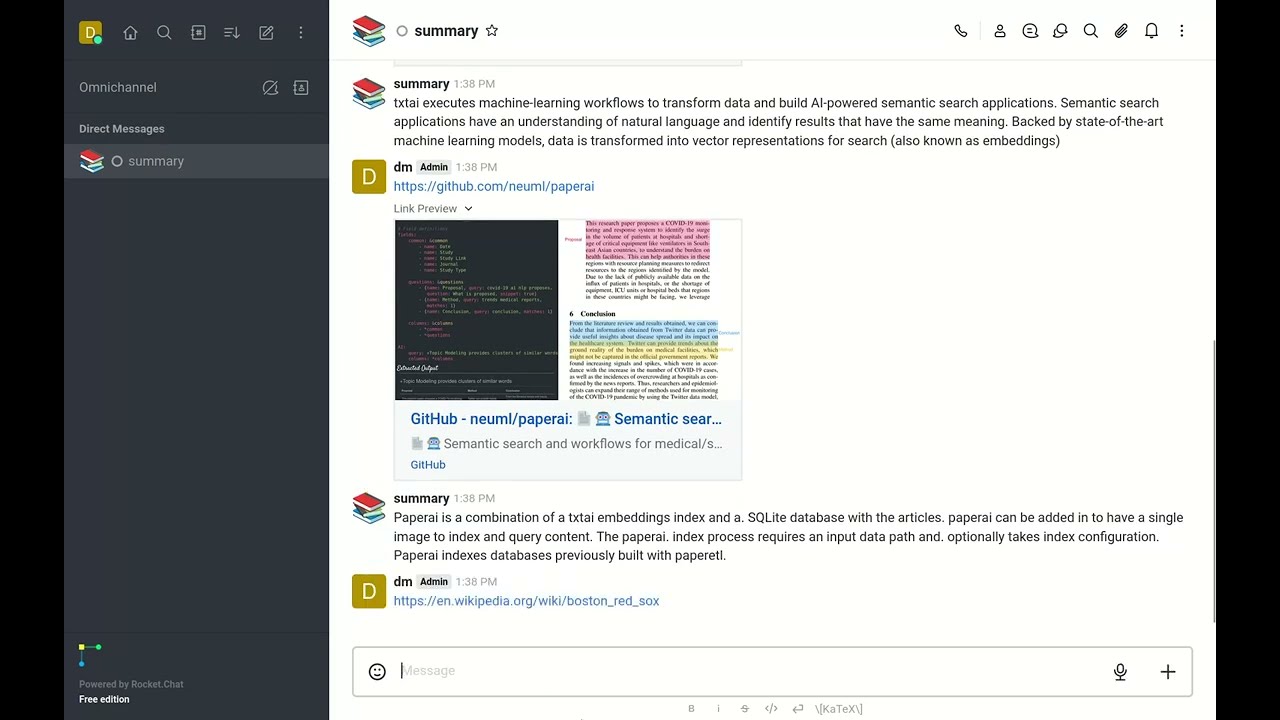

- Summary: Reads input URLs and summarizes the text

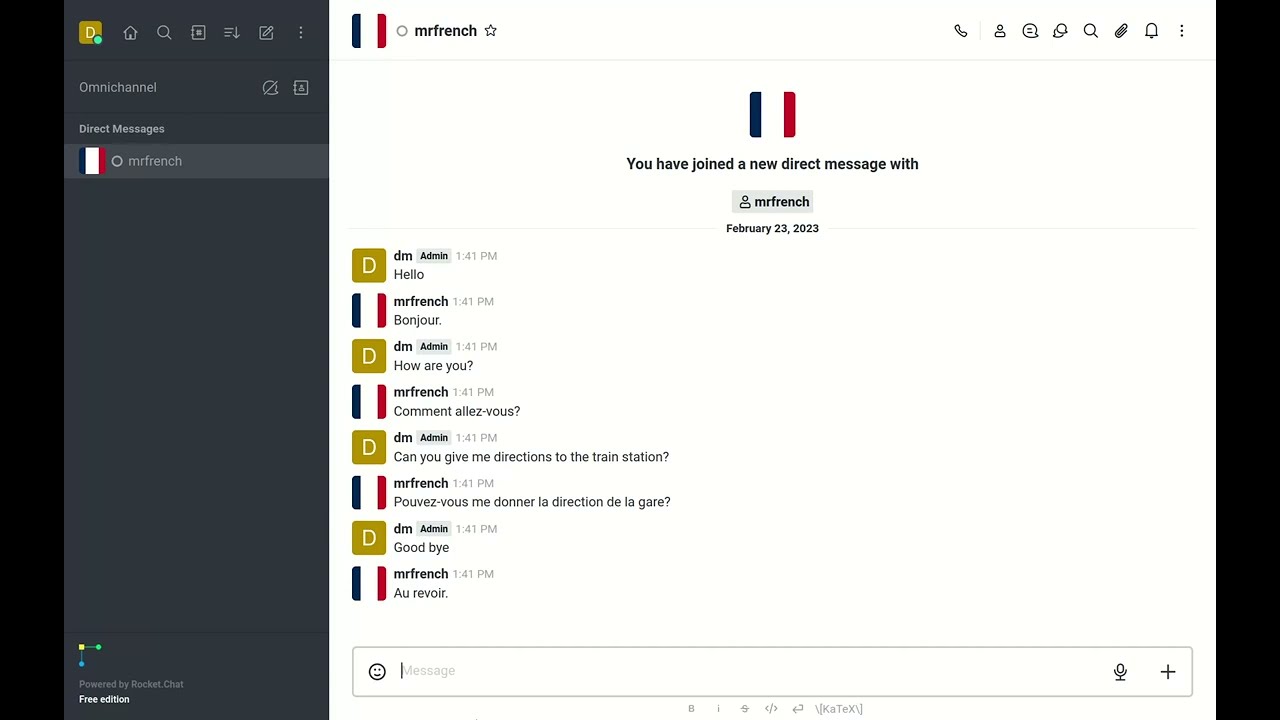

- Mr. French: Translates input text into French

See the examples directory for additional persona and workflow configurations.

The following command shows how to start a txtchat persona.

# Set to server URL, this is default when running local

export AGENT_URL=ws://localhost:3000/websocket

export AGENT_USERNAME=<Rocket Chat User>

export AGENT_PASSWORD=<Rocket Chat User Password>

# YAML is loaded from Hugging Face Hub, can also reference local path

python -m txtchat.agent wikitalk.yml

Want to add a new persona? Simply create a txtai workflow and save it to a YAML file.

The following is a list of YouTube videos that shows how txtchat works. These videos run a series of queries with the Wikitalk persona. Wikitalk is a combination of a Wikipedia embeddings index and a LLM prompt to answer questions.

Every answer shows an associated reference with where the data came from. Wikitalk will say "I don't have data on that" when it doesn't have an answer.

Conversation with Wikitalk about history.

Talk about sports.

Arts and culture questions.

Let's quiz Wikitalk on science.

Not all workflows need a LLM. There are plenty of great small models available to perform a specific task. The Summary persona simply reads the input URL and summarizes the text.

Like the summary persona, Mr. French is a simple persona that translates input text to French.

Want to connect txtchat to your own data? All that you need to do is create a txtai workflow. Let's run through an example of building a Hacker News indexing workflow and a txtchat persona.

First, we'll define the indexing workflow and build the index. This is done with a workflow for convenience. Alternatively it could be a Python program that builds an embeddings index from your dataset. There are over 50 example notebooks covering a wide range of ways to get data into txtai. There are also example workflows that can be downloaded from in this Hugging Face Space.

path: /tmp/hn

embeddings:

path: sentence-transformers/all-MiniLM-L6-v2

content: true

tabular:

idcolumn: url

textcolumns:

- title

workflow:

index:

tasks:

- batch: false

extract:

- hits

method: get

params:

tags: null

task: service

url: https://hn.algolia.com/api/v1/search?hitsPerPage=50

- action: tabular

- action: index

writable: trueThis workflow parses the Hacker News front page feed and builds an embeddings index at the path /tmp/hn.

Run the workflow with the following.

from txtai.app import Application

app = Application("index.yml")

list(app.workflow("index", ["front_page"]))Now we'll define the chat workflow and run it as an agent.

path: /tmp/hn

writable: false

extractor:

path: google/flan-t5-xl

output: flatten

workflow:

search:

tasks:

- task: txtchat.task.Question

action: extractorpython -m txtchat.agent query.yml

Let's talk to Hacker News!

As you can see, Hacker News is a highly opinionated data source!

Getting answers is nice but being able to have answers with where they came from is nicer. Let's build a workflow that adds a reference link to each answer.

path: /tmp/hn

writable: false

extractor:

path: google/flan-t5-xl

output: reference

workflow:

search:

tasks:

- task: txtchat.task.Question

action: extractor

- task: txtchat.task.Answer